Introduction

A premium price usually buys premium performance. That holds for graphics cards, as it does for most consumer products on the market.

If you are reading this article, you are likely one of three kinds of people: geeks who are genuinely curious about how high a GPU can be priced; gaming enthusiasts who want their hands on top-of-the-line graphics cards; or AI and creative professionals who need high-end graphics for their work and have deep pockets.

Disclaimer: We have neither used nor tested any of the super-elite cards listed here. Like many of you out there, we cannot afford them.

What we have tried to do in this article is give you, after hours of research, the most relevant information about the most expensive graphics cards. If their eye-watering prices don’t put you off, and you are serious about buying one for your needs, the affiliate links can take you directly to Amazon.

We have added a ‘Graphics Cards Lingo’ section so you can better understand the specifications of each card. Skip it if you already know enough about GPUs.

Graphics Cards Lingo:

Reference Card Vs. Non-Reference cards:

As far as the actual design of the GPU is concerned, there are two meaningful competitors in the market: NVIDIA and AMD. Cards built to the chip maker’s own board and cooler design are called reference cards.

But what about the cards by MSI, ASUS, and GIGABYTE?

Their cards are built around the same Nvidia or AMD chips, but add custom coolers and software packages for overclocking, and tweak the design of the card to make it more attractive or more robust. These cards are called non-reference GPUs.

Base clock speed and boosted clock speed:

Base clock speed is the speed the card is guaranteed to sustain under load, whereas boost clock speed is the higher speed it reaches automatically when power and temperature allow. Many card makers ship their cards with a boost clock raised above the reference value to maximize performance, a process called factory overclocking.
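To put the gap between the two clocks into a number, here is a minimal sketch. The function name is our own, and the figures are the 1350 MHz base and 1650 MHz boost clocks quoted for the RTX 2080 Ti later in this article.

```python
def boost_headroom_percent(base_mhz: float, boost_mhz: float) -> float:
    """Return how far the boost clock sits above the base clock, in percent."""
    if base_mhz <= 0:
        raise ValueError("base clock must be positive")
    return (boost_mhz - base_mhz) / base_mhz * 100.0


# Clocks quoted in this article for the RTX 2080 Ti.
print(f"Boost headroom: {boost_headroom_percent(1350, 1650):.1f}%")
```

A bigger percentage only tells you how much room the card has on paper; how long it actually holds the boost clock depends on cooling and power.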

CUDA or Stream Cores:

GPUs, like CPUs, have cores at their heart to do the calculations. However, unlike CPUs, which have a small number of powerful cores, GPUs have thousands of simpler cores that process a multitude of calculations in parallel.

The cores in an Nvidia chip are called CUDA cores, and those in an AMD chip are called Stream cores. It is pointless to compare an AMD Stream core count with an Nvidia CUDA core count, because the two are built quite differently.

Memory size, types, and clock speed:

Dedicated GPUs use VRAM, which ranges from as little as 2 GB for basic gaming to 12 GB or more for playing AAA titles at high settings. A larger memory size generally helps you squeeze the best performance out of your card.

For the most part, high-end GPUs come with GDDR5 or GDDR6 VRAM. The latter (GDDR6) has the upper hand in power efficiency and overall performance.

Closely linked with the memory type is the memory clock speed, which determines how fast data moves between the memory and the GPU. All else being equal, the higher the memory clock, the better the card performs.
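As a rough illustration of how the memory clock turns into actual throughput, the sketch below multiplies the effective memory clock by the width of the memory bus. The bus width is not listed in our spec lines; the 352-bit figure used here for the RTX 2080 Ti is an assumption brought in from outside this article, and the function name is our own.

```python
def memory_bandwidth_gb_s(effective_clock_mhz: float, bus_width_bits: int) -> float:
    """Estimate peak memory bandwidth in GB/s.

    effective_clock_mhz: effective (data-rate) memory clock, e.g. 14000 for GDDR6.
    bus_width_bits: memory bus width in bits (assumed, not from the spec lines).
    """
    bits_per_second = effective_clock_mhz * 1e6 * bus_width_bits
    return bits_per_second / 8 / 1e9  # bits -> bytes -> gigabytes


# 14000 MHz effective clock (from the article) on an assumed 352-bit bus.
print(f"{memory_bandwidth_gb_s(14000, 352):.0f} GB/s")
```

The same clock on a narrower bus gives proportionally less bandwidth, which is why the memory clock on its own does not tell the whole story.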

Thermal Design Power (TDP):

Thermal Design Power gives you an idea of the power drawn by the GPU and the heat it dissipates while running at full capacity. It dictates the capacity of the power supply unit (PSU) your system needs and also the cooling power required to keep things under control.

Roughly speaking, a card with a 250 W TDP needs a 500 W PSU and a robust cooling mechanism with a larger number of large fans.
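The rule of thumb above (a 250 W card wants a 500 W PSU) works out to a factor of two. Here is a small sketch that applies it and rounds up to the next 50 W, since PSUs are sold in steps. The factor, the 50 W step, and the function name are our assumptions for illustration, not a manufacturer recommendation.

```python
import math


def recommended_psu_watts(gpu_tdp_watts: float, factor: float = 2.0, step: int = 50) -> int:
    """Apply the 'PSU = 2 x GPU TDP' rule of thumb, rounded up to a common PSU size."""
    if gpu_tdp_watts <= 0:
        raise ValueError("TDP must be positive")
    raw = gpu_tdp_watts * factor
    return math.ceil(raw / step) * step


# TDP figures quoted in this article.
for tdp in (250, 260, 300):
    print(f"{tdp} W card -> about {recommended_psu_watts(tdp)} W PSU")
```

Treat the result as a starting point; a power-hungry CPU or lots of drives will push the requirement higher.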

Cooling mechanism:

The number and size of the fans, along with the form factor of the graphics card, determine the cooling power of a GPU. Broadly, more fans, bigger fans, and a larger form factor mean more cooling power.

Throttling:

GPU throttling, a drop in performance under load, springs from several causes. The most common is thermal throttling, which is due to inefficient heat dissipation when the card is pushed to its limits. The fans can’t keep up with the heat produced by the card, and it approaches dangerously high temperatures. The GPU then has to give up performance to bring the temperature under control.
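The idea can be sketched as a toy model: whenever the temperature is above a limit, the card steps its clock down, and when it is back under the limit, the clock climbs toward boost again. The 83 °C limit, the 15 MHz step, and the function name are all hypothetical values chosen for illustration; real cards use their own firmware logic.

```python
def throttle_step(temp_c: float, clock_mhz: float, boost_mhz: float,
                  min_mhz: float, limit_c: float = 83.0,
                  step_mhz: float = 15.0) -> float:
    """Return the next clock speed for a toy thermal-throttling model."""
    if temp_c > limit_c:
        # Too hot: give up some clock speed, but never drop below the floor.
        return max(min_mhz, clock_mhz - step_mhz)
    # Cool enough: climb back toward the boost clock.
    return min(boost_mhz, clock_mhz + step_mhz)


# Walk through a made-up temperature trace, starting at the boost clock.
clock = 1650.0
for temp in (70, 80, 86, 90, 88, 82, 75):
    clock = throttle_step(temp, clock, boost_mhz=1650.0, min_mhz=1350.0)
    print(f"{temp} C -> {clock:.0f} MHz")
```

Better cooling keeps the temperature under the limit for longer, so the clock spends more time at the top.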

Disclosure: As an Amazon Associate I earn from Qualifying Purchases, at no additional cost.

Top 7 GPUs To Buy In 2022

7 Most Expensive GPUs

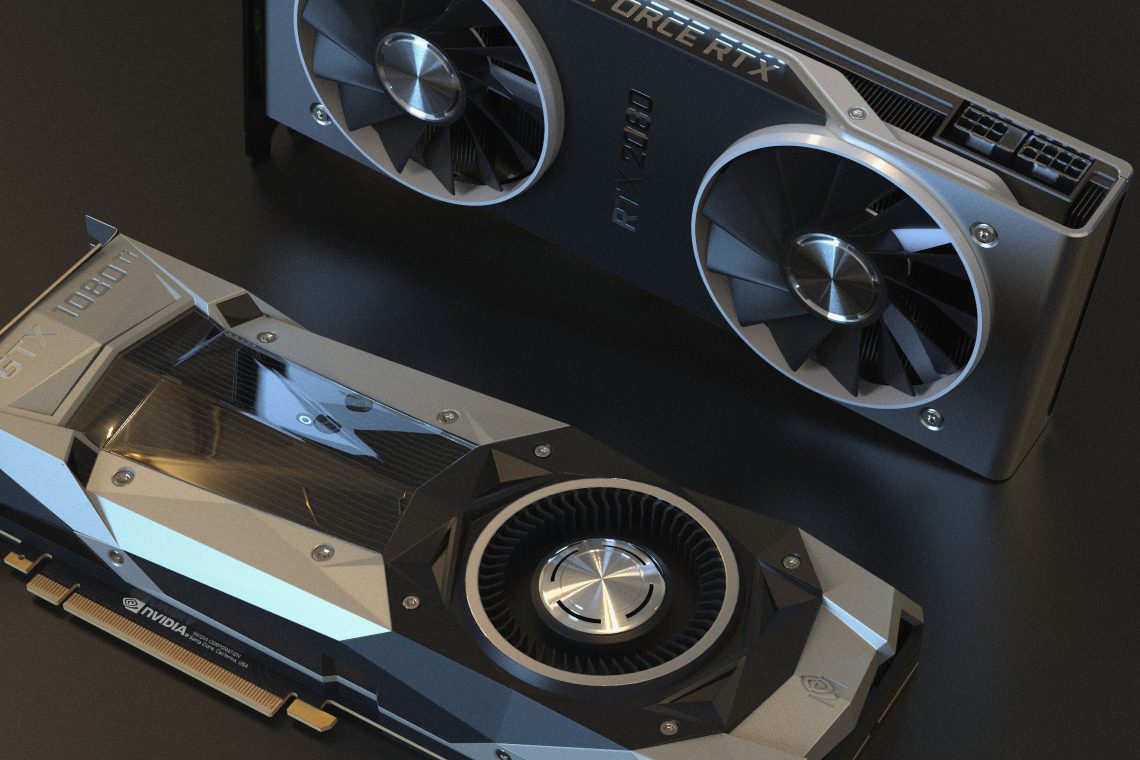

Asus ROG STRIX GeForce RTX 2080TI Overclocked 11G GDDR6 HDMI DP 1.4 USB Type-C Gaming Graphics Card (ROG-STRIX-RTX-2080TI-O11G)

Graphics Engine: NVIDIA GeForce RTX 2080 Ti | Architecture: Nvidia Turing | Cuda Cores: 4352 CUDA Cores | Power Connectors: 2 x 8-pin | Memory Size and Memory Clock: GDDR6 11 GB and 14000 MHz | Base Clock Speed & Boosted Clock Speed: 1350 MHz–1650 MHz | Interface: HDMI Output, DisplayPort, HDCP Support USB Type-C | Power consumption: 260 W

Republic of Gamers (ROG) is a brand dedicated to serious gamers, which the parent company, ASUS, introduced in 2006 to woo the fast-growing gaming community. It has largely succeeded in capturing a sizable share of the gaming market.

The STRIX GeForce RTX 2080 Ti is a flagship product of the company. Powered by one of Nvidia’s fastest chips, it can chew through every graphics-heavy task you throw at it. The powerful performance is matched by the premium price of the card.

It is a non-reference GPU, as ASUS has built upon the reference design by Nvidia: the GeForce RTX 2080 Ti. The reference chip itself belongs to an elite line of products based on the Turing architecture. It is VR and AI ready and supports display output at up to 7680 x 4320 resolution at 60 Hz. With ray tracing enabled, users get more realistic lighting effects while gaming.

A high number of CUDA cores (4352) and one of the largest and fastest VRAM configurations (11 GB GDDR6) work to the advantage of the GPU. Without them, smooth 4K gaming would not be possible.

Asus has overclocked the card to boost its performance by 10%. The company has engineered the fans (and the form factor) to enhance cooling, minimize noise, and avoid thermal throttling. When the card is running at a safe temperature, the fans automatically turn off to give you a noise-free gaming experience (known as 0dB mode).

Being a powerful card, it draws a lot of power, roughly 260 to 300 watts, and therefore needs a large power supply unit. It draws that power through 2 x 8-pin PCIe connectors and supports multiple display interfaces.

To sum up, it is a premium gaming card whose performance is challenged by few.

ZOTAC GeForce GTX 1080 Ti AMP Extreme

Graphic Engine: NVIDIA GeForce GTX 1080 Ti | Architecture: Nvidia Pascal | Cuda Cores : 3584 CUDA Cores | Power Connectors: 2 x 8-pin | Memory Size and Memory Clock: GDDR5X 11 GB and 11200 MHz | Base Clock Speed & Boosted Clock Speed: 1645 MHz–1759 MHz | Interface: HDMI Output , DisplayPort, HDCP Support | Power consumption: 300 Watt

The ZOTAC GeForce GTX 1080 Ti AMP Extreme is slightly less expensive and significantly less powerful than the ASUS ROG STRIX GeForce RTX 2080 Ti we discussed above.

To start with, its Pascal architecture, a predecessor of Volta and Turing, is more than adequate for playing the latest games at high settings. But there is a catch. GTX chips based on the Pascal architecture are not equipped with RT and Tensor cores. As a result, the card offers neither the hardware ray-traced effects of RTX GPUs nor dedicated acceleration for machine learning projects.

Despite having the same VRAM size (11 GB) as the ROG STRIX GeForce RTX 2080 Ti, it is less power-efficient and lags behind in memory-intensive tasks. The reason is its GDDR5X memory, a predecessor of GDDR6.

This GPU is overclocked, and the company provides software with the card to allow users to tweak it to their own preferences. A comparatively high base and boosted clock speed is its definite advantage over competitors.

Speaking of cooling power, its three large fans and large heat sink ensure quiet operation. Even when pushed to its limits, the card hardly shows any sign of throttling in short bursts. However, that does not hold for extended periods of use at high settings.

The company has encased it in a sturdy frame to minimize the chances of physical damage. It needs a large power supply unit to operate and draws power through 2 x 8-pin PCIe connectors.

The Zotac GeForce GTX 1080 Ti is designed for gamers who prefer dependable cooling and boosted processing power over all other features.

MSI GEFORCE GTX1080 TI GAMING X 11G

Graphics Engine: NVIDIA GeForce GTX 1080 Ti | Architecture: Nvidia Pascal | Cuda Cores : 3584 CUDA Cores | Power Connectors: 2 x 8-pin | Memory Size and Memory Clock: GDDR5X 11 GB and 11200 MHz | Base Clock Speed & Boosted Clock Speed: 1600 MHz–1900 MHz | Interface: HDMI Output , DisplayPort, HDCP Support | Power consumption: 279 Watt

The MSI GEFORCE GTX1080 TI GAMING X has a lot in common with the ZOTAC GeForce GTX 1080 Ti AMP Extreme, except for the price: the former is relatively expensive.

As they both share the same reference chip by NVIDIA, the GeForce GTX 1080 Ti, the similarities come as no surprise. However, MSI’s overclock mode can give the card a noticeable lift in performance.

There is no need to emphasize the importance of 11 GB of VRAM, or the high number of CUDA cores, as we have just discussed their significance in the review above. One point that we might have missed is that the GeForce GTX 1080 Ti offers a whopping 30 percent gain over the GeForce GTX 1080.

MSI delivers this card with software to tweak it as per user preference. Though MSI’s overclock mode is enough for fast performance, manual overclocking squeezes the best out of the GPU.

It is VR ready and can play AAA title games at ultra resolution (4K) with modest FPS. As per the popular GPU benchmarks, MSI Gaming 11G stands head and shoulders above other cards that run on the same Nvidia technology.

This card is encased in a thick frame with two fans at the top and a heat-dissipating nickel-plated copper base plate at the bottom. Both fans are large enough to keep the temperature under control, with an equally large and thick heat sink to expel the heat efficiently. All the components are screwed tightly into one brick-sized graphics card.

Aesthetically, its design is consistent with MSI’s ‘Dragon Theme’ and its red and black color combination.

MSI GEFORCE GTX1080 TI GAMING X 11G is another high-performance card for gamers with a mouth-watering price tag.

NVIDIA GeForce RTX 2080 Ti Founders Edition

Graphic Engine: NVIDIA GeForce RTX 2080 Ti | Architecture: Nvidia Turing | Cuda Cores : 4352 CUDA Cores | Power Connectors: 2 x 8-pin | Memory Size and Memory Clock: GDDR6 11 GB and 1635 MHz | Base Clock Speed & Boosted Clock Speed: 1350 MHz–1650 MHz | Interface: HDMI Output , DisplayPort, HDCP Support USB Type-C | Power consumption: 260 W

The NVIDIA GeForce RTX 2080 Ti Founders will make you want to rob a bank to pay for it, just like all the other cards in our list. Seriously, folks, you won’t want to spend a fortune on it unless your mind is set on serious gaming.

Featuring the Turing architecture, this GPU is a break from its predecessor’s design. The new architecture packs more cores into a limited space, in keeping with Moore’s law, and is therefore capable of delivering high raw power. Apart from standard CUDA cores, it has RT cores (ray-tracing cores for realistic lighting) and Tensor cores (for deep learning projects).

The 11 GB of GDDR6 VRAM is more than enough to run AAA games at 4K, or even at higher settings (up to 8K). A definite advantage you get with this GPU is the high clock speed of the VRAM, which ensures fast data transfer.

It is common knowledge in the gaming community that Nvidia cards are more suitable for live streaming than more affordable AMD GPUs. With the RTX 2080 Ti, the company has widened the gap. It comes with a dedicated hardware encoder to maintain high quality while gaming and streaming at the same time.

The GPU may not have the best cooling system, but it is enough to maintain a safe temperature while running at full throttle. On the acoustic side, however, it has a major downside: the fans are not quiet, and they run at relatively high speed even to maintain a minimum performance level.

It comes with HDMI output, DisplayPort, and HDCP support, but the standout output is the USB Type-C port.

Though expensive, this GPU has its appeal to all kinds of users who have a heavy dependence on graphic rendering. Be it gaming, live streaming, or AI learning, this well-rounded chip is powerful enough to cater to all kinds of needs.

NVIDIA Quadro P6000

Graphics Engine: NVIDIA Quadro P6000 | Architecture: NVidia Pascal | Cuda Cores : 3840 CUDA Cores | Power Connectors: 1×6-pin &1 x 8-pin | Memory Size and Memory Clock: GDDR5 24 GB and 9008 MHz | Base Clock Speed & Boosted Clock Speed: 1506 MHz–1645 MHz | Interface: HDMI Output , DisplayPort | Power consumption: 250W

The Nvidia Quadro series features graphics cards not meant for regular consumers’ needs. They are designed for large corporations (large content production houses, oil and resource exploration firms, and AI-based companies) and therefore have a high price tag attached to them.

The NVIDIA Quadro P6000 comes with 3840 CUDA cores, and you would be right to object. Why? One gets a whopping 4352 CUDA cores in the NVIDIA GeForce RTX 2080 Ti, which is significantly lower in price than the Quadro. Also, the P6000 is based on the Pascal architecture, the predecessor of the Turing architecture in the GeForce RTX 2080 Ti, so why is there such a phenomenal difference in price?

The answer lies partly in its large memory size (24 GB) and high memory speed. The Pascal-based Quadro line goes further still: two Quadro GP100 cards can be connected via NVIDIA NVLink technology to take advantage of 32 GB of combined HBM2 ultra-high-bandwidth memory.

Also, it comes with special drivers to aid professionals in performing highly demanding tasks, e.g. photorealistic design, VR simulation, and 3D modeling. But that alone doesn’t justify the price.

This card justifies its mind-boggling price by offering high reliability, low power consumption, and low heat output for its class. Consumers and large-scale companies differ in their approach, and Nvidia knows it well. The former can compromise on long-term reliability if it saves them a few extra bucks, whereas the latter (companies) cannot compromise on long-term performance even if they have to pay a stupendously high price for it.

AMD Radeon VII

Graphics Engine: AMD Radeon VII | Architecture: 7nm | Stream Cores: 3,840 | Power Connectors: 2 x 8-pin | Memory Size and Memory Clock: 16GB of VRAM, 1000 MHz | Base Clock Speed & Boosted Clock Speed: 1400-1800 MHz | Interface: 3 x DisplayPort 1.4, 1 x HDMI 2.0 | Power consumption: 300 Watts

The AMD Radeon VII was meant to be a head-to-head competitor of Nvidia’s RTX 2080 cards, and upon its release, it was well received by the gaming community. It did manage to beat its Nvidia counterpart in some areas (memory speed and cooling power) but was unable to gain the upper hand.

The Radeon VII has no Tensor or RT cores for machine learning projects and ray-traced rendering respectively. This is arguably the key reason that users didn’t prefer this card over the RTX 2080. Another reason was that the card was less suited to live streaming.

16 GB of VRAM is a massive amount of memory, the likes of which we see in multi-thousand-dollar NVIDIA Titan cards. But size is one thing; the fact that it is HBM2 memory is what makes this card extraordinary. It can transfer data at an ultra-fast 1 TB/s.
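For the curious, the 1 TB/s figure can be reproduced from the 1000 MHz memory clock in the spec line, as the short sketch below shows. Two inputs are not in this article and are assumptions from outside it: HBM2 moves two bits per pin per clock cycle, and the Radeon VII’s memory bus is 4096 bits wide. The function name is ours.

```python
def hbm2_bandwidth_gb_s(memory_clock_mhz: float, bus_width_bits: int,
                        transfers_per_clock: int = 2) -> float:
    """Estimate peak bandwidth in GB/s for double-data-rate memory such as HBM2."""
    bits_per_second = memory_clock_mhz * 1e6 * transfers_per_clock * bus_width_bits
    return bits_per_second / 8 / 1e9  # bits -> bytes -> gigabytes


# 1000 MHz memory clock (from the spec line) on an assumed 4096-bit bus.
print(f"{hbm2_bandwidth_gb_s(1000, 4096):.0f} GB/s")
```

The memory clock looks low next to GDDR6, but the very wide bus more than makes up for it.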

Gamers and creative professionals can squeeze the best out of this card. At high resolutions (4K) and solid frame rates, all cards use a lot of memory. When VRAM runs out, a card is compelled to fall back on slower system memory, hampering its speed in the process. Here, the Radeon VII can take advantage of its large memory to avoid that slowdown.

Second only to memory, the cooling power of this card is its top feature. It is encased in a sturdy frame with three fans and a large heat sink. Despite the 300 watts it draws through its two 8-pin connectors, it runs at a manageable temperature thanks to its effective cooling and power-efficient stream cores.

All in all, this card can benefit only those creative professionals and high-end gamers who need to work with large files through the course of their day.

NVIDIA Titan RTX Graphics Card

Graphics Engine: Nvidia Titan RTX | Architecture: Turing | CUDA Cores: 4608 | Power Connectors: 2 x 8-pin | Memory Size and Memory Clock: 24 GB of GDDR6 memory, 7000 MHz | Base Clock Speed & Boosted Clock Speed: 1300-1700 MHz | Interface: HDMI, DVI, DisplayPort | Power consumption: 280 W

The Titan series features some of the most expensive cards by Nvidia and is second only to the Quadro cards when it comes to eye-watering prices. Both these series, Titan and Quadro, are designed not for regular consumers but for high-end professionals with deep pockets. To mention a few, the Titan RTX caters to the needs of AI researchers, deep learning developers, data scientists, content creators, and artists.

This card comes with 576 Tensor cores and 72 RT cores to back its claim that it is a card for the budding artificial intelligence industry. To remind you, dedicated Tensor cores significantly improve GPU performance in machine learning tasks, whereas RT cores have their role to play in rendering photorealistic graphics.

Next to cores, it has a huge memory size of 24 GB, equal to that of the Quadro P6000. The memory is fast enough for high-performance needs. Heavy-duty 3D rendering software and machine learning algorithms can bank on the fast memory speed and large memory size.

Lastly, it draws roughly 280 watts, fed through two 8-pin connectors from the power supply, so you will need a large power supply unit to run this card in your PC. The cooling power is just OK and could have been better with a three-fan design.